🔎 Focus: Technical SEO

🔴 Impact: High

🟠 Difficulty: Mid-High

Sponsored By Ahrefs

Stop wondering if AI is talking about your brand. This post is brought to you by Ahrefs, the leading SEO & marketing intelligence platform.

With their new Brand Radar tool, you can finally monitor your visibility across major AI engines: from ChatGPT to Google’s AI Overviews. Secure your brand as the top choice in the era of AI.

Dear Tech SEO 👋

Let’s talk today about Structured Data and LLMs (AI).

Structured data to prevent AI hallucination

Most of us use Structured Data to get "stars in the SERPs". And do not get me wrong, that indeed helps CTR go up. But we all know rankings are not the only field where SEO is played.

For AI systems (our beloved AI chatbots), structured data is not only for fancy snippets; it is the best, most reliable and most affordable way to retrieve information.

LLMs work on tokens, and “unstructured” information just takes more computing power to process accurately. Structured data is the path to allow AI systems to hallucinate less (or not at all). In reality, no system needs structured data to function; systems need structured data to function accurately.

It turns out that AI systems grant wider token windows to pages that have implemented well-structured information (more on this later).

While I was reading Google’s GraphRAG documentation (GraphRAG is a method to build an agentic AI system), I noticed they literally state:

Prompts that are augmented using GraphRAG can generate more detailed and relevant AI responses.

LLMs will buy products on our behalf soon. Without structured data, that could get quite messy. Today I will show you how important it is to build a structured-data-first SEO strategy for AI Agents.

Ready? Let’s goooooooo

Group Your Structured Data Per Entity

If your site (on Shopify, Next.js, WordPress, etc.) contains separate blocks for Product, Review, and Organization, that is your first point to improve. For example, Gemini’s reasoning engine uses GraphRAG (Graph-based Retrieval-Augmented Generation).

If separate entities are not explicitly associated, the AI has to "guess" whether the Review belongs to the Product or whether the Brand is a trusted Organization. That guessing just costs more money. AI needs a single, connected graph.

How To Upgrade: Move from separate scripts to a Nested @graph Array. This uses @id as a "semantic anchor" to link your catalog data into one relational source of truth (using IDs).

Example:

🤦‍♂️ Separate scripts for Brand and Product.

The AI has to guess the relationship.

<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Organization", "name": "TechGear"}</script>

<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Product", "name": "Pro Sensor V3"}</script>

✅ The Agentic Way: Nested @graph Array:

One script, everything nested and easy to process. We use @id to tell Gemini: "This specific Organization is the Brand behind this specific Product."

    {
      "@context": "https://schema.org",
      "@graph": [
        {
          "@type": "Organization",
          "@id": "https://yourstore.com/#org",
          "name": "TechGear HQ",
          "url": "https://yourstore.com",
          "sameAs": ["https://www.wikidata.org/wiki/Q12345"]
        },
        {
          "@type": "Product",
          "@id": "https://yourstore.com/pro-sensor-v3#product",
          "name": "Pro Sensor V3",
          "brand": { "@id": "https://yourstore.com/#org" },
          "offers": {
            "@type": "Offer",
            "price": "299.00",
            "availability": "https://schema.org/InStock"
          }
        }
      ]
    }
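
If you already have the separate blocks at hand, the upgrade can be scripted. Here is a minimal Python sketch under my own assumptions: the function name merge_into_graph is hypothetical, each entity type appears only once (so the lowercased type name works as the fragment), and every Product on the page belongs to the single Organization. Adapt the linking rule to your own catalog.

```python
import json


def merge_into_graph(blocks, base_url):
    """Combine standalone JSON-LD objects into one @graph document."""
    graph = []
    org_id = None
    for block in blocks:
        # The shared @context moves to the top level of the merged document.
        node = {key: value for key, value in block.items() if key != "@context"}
        # Assumption: one entity per type, so the type name is a unique fragment.
        node.setdefault("@id", base_url + "#" + node["@type"].lower())
        if node["@type"] == "Organization":
            org_id = node["@id"]
        graph.append(node)
    # Assumption: every Product belongs to the single Organization on the page.
    # Link by reference instead of repeating the Organization data.
    for node in graph:
        if node["@type"] == "Product" and org_id is not None:
            node["brand"] = {"@id": org_id}
    return {"@context": "https://schema.org", "@graph": graph}


separate_blocks = [
    {"@context": "https://schema.org", "@type": "Organization", "name": "TechGear"},
    {"@context": "https://schema.org", "@type": "Product", "name": "Pro Sensor V3"},
]
merged = merge_into_graph(separate_blocks, "https://yourstore.com/")
print(json.dumps(merged, indent=2))
```

The printed document is what would go inside a single application/ld+json script tag.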

@graph?

You are probably not that familiar with @graph (just like me), but it is simply the JSON-LD keyword that lets you list all your entities in one array and turn your schema into relational structured data. In the example above, the combination of @graph and @id is what holds the Organization and the Product together.

Why use #org or #product in the ID?

This is a Fragment Identifier. It tells the AI that the entity is a specific "node" on the page. When Gemini "cites" your product, it "knows" the product belongs to your Organization in its internal knowledge graph.
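
A reference only helps if it points somewhere. The sketch below (Python, with a hypothetical find_dangling_refs helper that is not part of any library) walks a @graph and lists every {"@id": ...} reference that has no matching node. It assumes all referenced entities live in the same script, so references to entities defined on other pages would be flagged too.

```python
def find_dangling_refs(doc):
    """Return @id references in a @graph that do not match any node in it."""
    nodes = doc.get("@graph", [])
    defined = {node["@id"] for node in nodes if "@id" in node}
    dangling = []

    def walk(value):
        if isinstance(value, dict):
            # A dict holding only "@id" is a reference to another node.
            if set(value) == {"@id"}:
                if value["@id"] not in defined:
                    dangling.append(value["@id"])
            else:
                for child in value.values():
                    walk(child)
        elif isinstance(value, list):
            for item in value:
                walk(item)

    for node in nodes:
        for child in node.values():
            walk(child)
    return dangling


doc = {
    "@context": "https://schema.org",
    "@graph": [
        {"@type": "Organization", "@id": "https://yourstore.com/#org",
         "name": "TechGear HQ"},
        {"@type": "Product", "@id": "https://yourstore.com/pro-sensor-v3#product",
         "name": "Pro Sensor V3",
         # Deliberate typo: "#organization" instead of "#org".
         "brand": {"@id": "https://yourstore.com/#organization"}},
    ],
}
print(find_dangling_refs(doc))  # ['https://yourstore.com/#organization']
```

An empty list means every reference in the script resolves to a node in the same graph.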

Structured Data Gives You A Wider AI-Response Room

Research by Andrea Volponi on LLM retrieval found that AI agents apply a "confidence threshold" that translates into a word limit (Wordlim) for your content, determined by whether the crawled page has structured data or not.

If your data is messy, the AI creates a short, 200-word summary to avoid mistakes.

If your data is in a clean @graph, the AI treats it as a "High-Confidence" source and can grant you a visibility quota of 500+ words.

Without proper context, AI systems will hyper-summarize your content. With proper structured data you are literally buying wider response room.

DIY Structured Data Test

Let’s do an experiment.

Will AI retrieve my structured data?

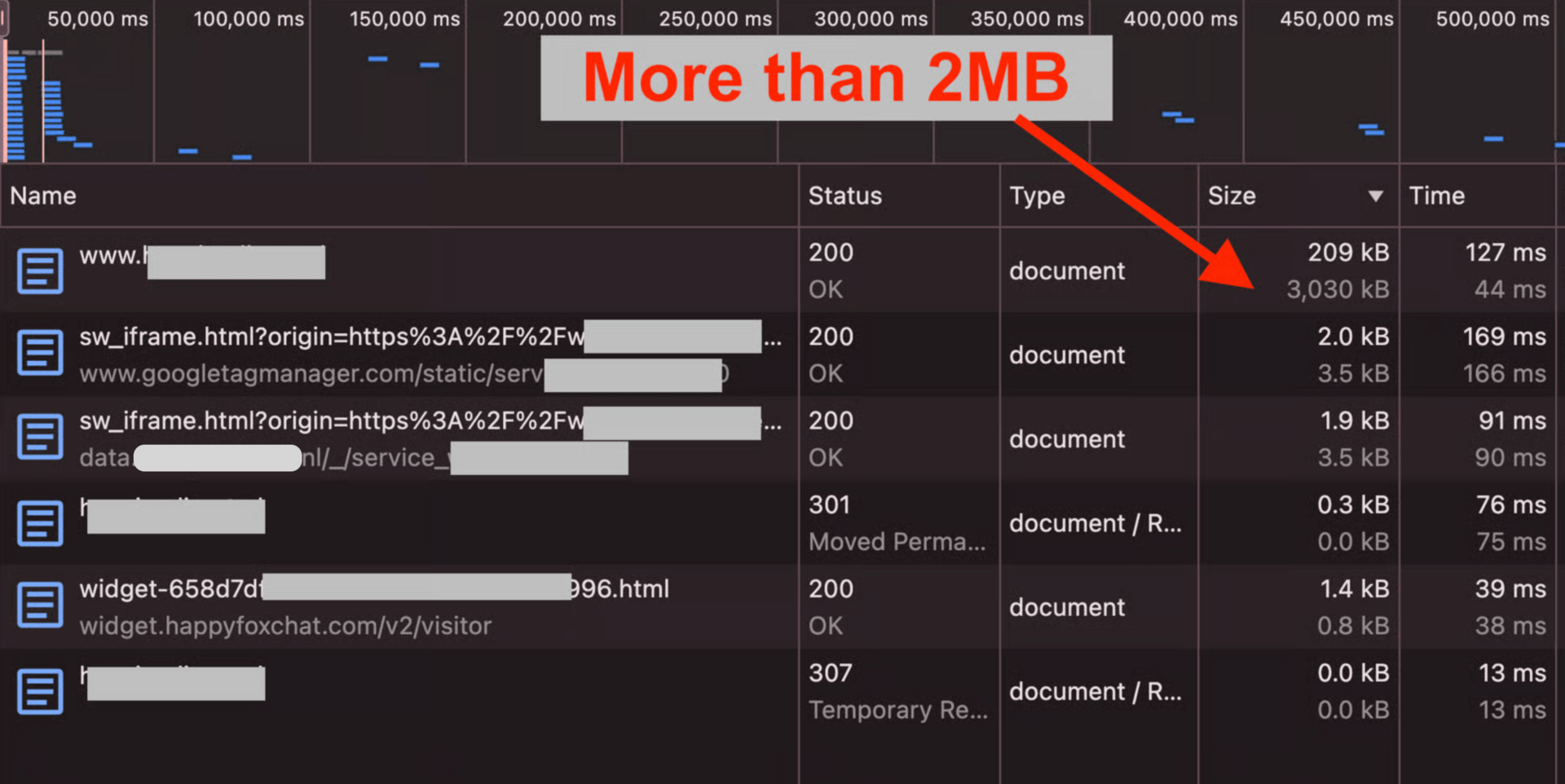

I wrote back in February that the 2MB limit on Google fetching for indexing might be even worse because of rendering limitations. Now I will show how the 2MB limit negatively affects the retrieval of Structured Data.

I took a web page that is well above that 2MB limitation:

Pages with HTML above 2MB might have their structured data missed by LLMs

I saved the HTML on my laptop to analyze it properly.

The page has a small Schema snippet on line 18337, almost at the end (no wonder, with the page being over 3MB in size).

The Schema data should not be hidden at the bottom of the HTML

I pasted the page into https://validator.schema.org/ to see what pops out. This was the first failure: the tool could not even process the HTML, as it exceeds its 2.5MB limit.

🗣 Translation from Spanish: “Are you crazy!? I cannot process such a huge azz file!“

Schema Validator tool cannot process files larger than 2.5MB

My plan B was Google’s Rich Results Test. Luckily this one picked up some of the JSON-LD scripts within the HTML:

The tool could only spot 2 out of 3 JSON-LD scripts present in the HTML. Those scripts were placed a lot earlier in the HTML (the scripts started on line 948):

2 out of 3 Schema scripts were retrieved. In this case, “WebPage” could not be included in the retrieval of the Schema (that script was way further down in the HTML)

Nota bene [10th May 2026]: further research leads me to mention that “WebPage” is no longer reported in the rich results. The fact that “WebPage” is not appearing here is not related to the page being a freaking 3MB heavy. I will still test whether the supported data (Organization and reviews) is indeed reported on such a heavy page when positioned at the bottom of the HTML.
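
You can run a rough version of this check on a saved HTML file yourself. The following Python sketch uses only the standard library's html.parser to report the line and byte offset where each JSON-LD script starts, and flags those that begin after the 2MB mark. The names JsonLdLocator, locate_json_ld and LIMIT_BYTES are mine, and treating 2MB as a hard cut-off for every crawler is an assumption taken from the experiment above. The demo page is synthetic filler, not the page I tested.

```python
from html.parser import HTMLParser

LIMIT_BYTES = 2 * 1024 * 1024  # assumption: the 2MB limit discussed above


class JsonLdLocator(HTMLParser):
    """Record the (line, column) of every JSON-LD script start tag."""

    def __init__(self):
        super().__init__()
        self.positions = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self.positions.append(self.getpos())


def locate_json_ld(html):
    """Return (line, byte_offset, within_limit) for each JSON-LD script."""
    parser = JsonLdLocator()
    parser.feed(html)
    parser.close()
    lines = html.split("\n")
    # line_starts[i] is the byte offset at which line i + 1 begins.
    line_starts = [0]
    for line in lines:
        line_starts.append(line_starts[-1] + len(line.encode("utf-8")) + 1)
    results = []
    for line_no, column in parser.positions:
        prefix = lines[line_no - 1][:column]
        offset = line_starts[line_no - 1] + len(prefix.encode("utf-8"))
        results.append((line_no, offset, offset < LIMIT_BYTES))
    return results


# Synthetic page: one script in the head, one after roughly 3MB of filler.
snippet = '<script type="application/ld+json">{"@type": "WebPage"}</script>'
padding = ("<p>" + "x" * 1000 + "</p>\n") * 3000
page = ("<html><head>\n" + snippet + "\n</head><body>\n"
        + padding + snippet + "\n</body></html>")

for line_no, offset, ok in locate_json_ld(page):
    status = "within limit" if ok else "past the 2MB mark"
    print(f"line {line_no}, byte {offset}: {status}")
```

For a real page, read the saved file with open(path, encoding="utf-8").read() and pass the text to locate_json_ld.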

Quick Checklist to upgrade to LLM-friendly JSON-LD

| What | How |
|---|---|
| Nesting | Combine separate JSON-LD scripts into a single @graph array. |
| Anchoring | Use @id as the "semantic anchor" of each entity. |
| Referencing | Don't repeat data. If the Product has a Brand, point to the Brand's @id. |
| Prioritizing | Move the script to the top, as early as possible in the HTML, to ensure it’s parsed first. |

Structured Data For Agentic Commerce

We are moving from "Search" to "Action." The Agentic Commerce Protocol (ACP) is emerging as a standard for AI agents to execute purchases. To be "Agent-Eligible," your technical stack must move beyond basic price/availability.

Gemini now prioritizes three "Reasoning Properties" to decide if your product is worth recommending. This is where we can think of implementing not-so-common Schema properties that allow LLMs to build a wider context on our data, for example:

knowsAbout: Maps your brand to a Wikidata Q-ID (e.g., "Sustainable Materials").

handling_cutoff_time: Essential for the "I need it by Friday" reasoning path.

isRelatedTo: Defines the compatibility graph (essential for "Best accessories for X" queries).
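
To make two of these concrete, here is a small Python sketch that adds knowsAbout to the Organization and isRelatedTo to the Product of an existing @graph. The helper name add_reasoning_properties and the accessory URL are hypothetical. I left handling_cutoff_time out on purpose, because I am not sure it maps to a schema.org property, and I did not add a Wikidata Q-ID because I do not want to invent one: once you know the real ID, a sameAs URL on the topic would carry it.

```python
import json


def add_reasoning_properties(graph_doc, topics, related_product_ids):
    """Return a copy of a @graph document with knowsAbout and isRelatedTo set."""
    enriched = json.loads(json.dumps(graph_doc))  # simple deep copy
    for node in enriched["@graph"]:
        if node.get("@type") == "Organization":
            node["knowsAbout"] = topics
        elif node.get("@type") == "Product":
            # Point to related products by @id instead of repeating their data.
            node["isRelatedTo"] = [{"@id": pid} for pid in related_product_ids]
    return enriched


base = {
    "@context": "https://schema.org",
    "@graph": [
        {"@type": "Organization", "@id": "https://yourstore.com/#org",
         "name": "TechGear HQ"},
        {"@type": "Product", "@id": "https://yourstore.com/pro-sensor-v3#product",
         "name": "Pro Sensor V3", "brand": {"@id": "https://yourstore.com/#org"}},
    ],
}
enriched = add_reasoning_properties(
    base,
    topics=[{"@type": "Thing", "name": "Sustainable Materials"}],
    # Hypothetical accessory; it should exist as its own Product node somewhere.
    related_product_ids=["https://yourstore.com/sensor-mount#product"],
)
print(json.dumps(enriched, indent=2))
```

The related product is referenced by @id only, so it needs its own Product node (on this page or its own) for the link to mean anything.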

Do you want me to check if your Structured Data is set to win AI search?

Reply "AI Schema" with your domain name and I will check your Schema.


That’s it for today :)

Until next time 👋
