🔎 Focus: Crawling & Rendering

🔴 Impact: High (Content Invisibility)

🔴 Difficulty: High

Sponsored By Ahrefs

Stop wondering if AI is talking about your brand. This post is brought to you by Ahrefs, the leading SEO & marketing intelligence platform.

With their new Brand Radar tool, you can finally monitor your visibility across major AI engines: from ChatGPT to Google’s AI Overviews. Secure your brand as the top choice in the era of AI.

Dear Tech SEO 👋

Google is getting environmentally friendly (or it has to save some money) and it is cutting down crawling resources quite heavily… The cut specifically affects Googlebot, the default set of crawlers serving Google search results.

Googlebot ain’t the only crawler

Googlebot: (mobile and desktop) max. 2MB of a supported file like HTML

Googlebot PDF limit: 64MB

Google Crawlers: (any type of crawler beyond search) max. 15MB.
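To make those three numbers easier to play with, here is a minimal Python sketch. The dictionary keys and the function name are my own labels (not Google's), and I am assuming 1MB means 1024 × 1024 bytes, which may not be the exact unit Google uses.

```python
# Limits as quoted above, in uncompressed bytes per fetched file.
# Assumption: 1MB = 1024 * 1024 bytes.
MB = 1024 * 1024

FETCH_LIMITS = {
    "googlebot_html": 2 * MB,          # Googlebot, supported files such as HTML
    "googlebot_pdf": 64 * MB,          # Googlebot, PDF files
    "other_google_crawlers": 15 * MB,  # Google crawlers beyond search
}


def bytes_over_limit(size_bytes: int, crawler: str) -> int:
    """Return how many bytes fall beyond the limit (0 if the file fits)."""
    return max(0, size_bytes - FETCH_LIMITS[crawler])
```

For example, a 3MB HTML document is 1MB over the limit for `"googlebot_html"` but fits comfortably under `"other_google_crawlers"`.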

Most of us focused only on the file size cut, but we are ignoring a more critical issue: the time it takes to render/execute that file.

In short, crawling & fetching limits are related to the size of the file while rendering is limited by the time it takes to execute (or render) it.

I did some tests and found out:

Heavy HTMLs larger than 2MB ain’t that rare

Super light JS files blocked entire HTML sections

This means the effective content cutoff happens below the 2MB fetching limit.

1 infographic speaks a thousand tokens:

Difference between Crawling and Rendering

Source: Google (with my own tweaks)

Let’s have a look at the experiment I did.

Ready? Let’s go..

Test 1 - Are your pages above 2MB?

When Googlebot crawls and fetches a resource (a web page) it will first take the core HTML and then will crawl and fetch all the referenced files (JS, CSS, etc) separately. Each of these calls will be limited by the 2MB rule (except PDFs).

According to the Web Almanac, the 50th percentile (the median) of pages on mobile is below 2MB…

But in the world of web I do not like to rely on the average/median because with over a billion websites worldwide (and for sure over a trillion single pages), even 1% is a huge number of cases.

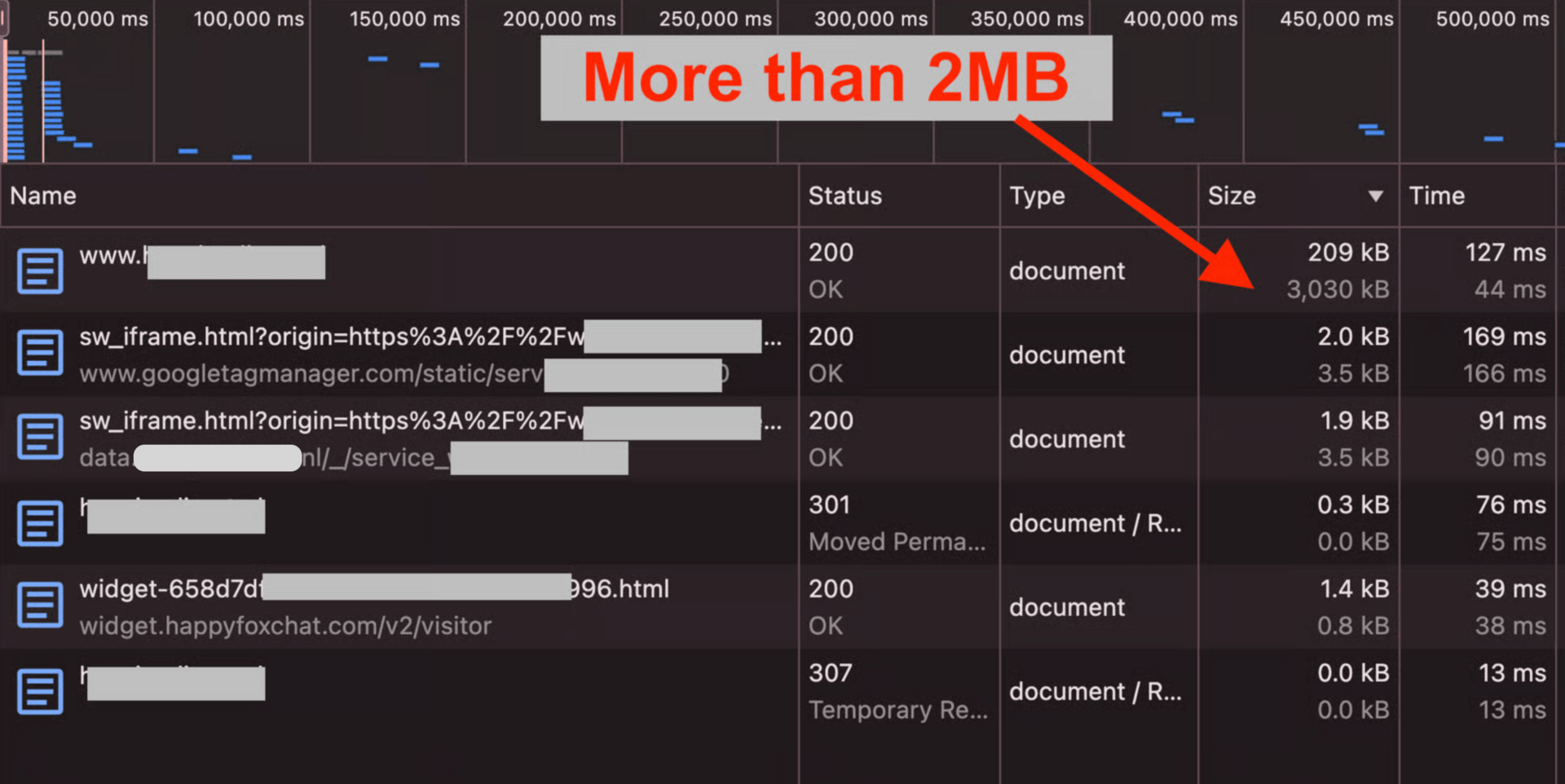

Example: Page Above 2MB - Do It At Home

So I tested how difficult it would be for me to find a page over the 2MB limit. Here we go:

I Googled “buy phone case”

I opened 5 different top results

One homepage was already around 3MB

Many of the top pages were above 1.5MB

Majority of pages were quite light (under 0.1MB)

I am talking about single-document sizes, not all resources at once:

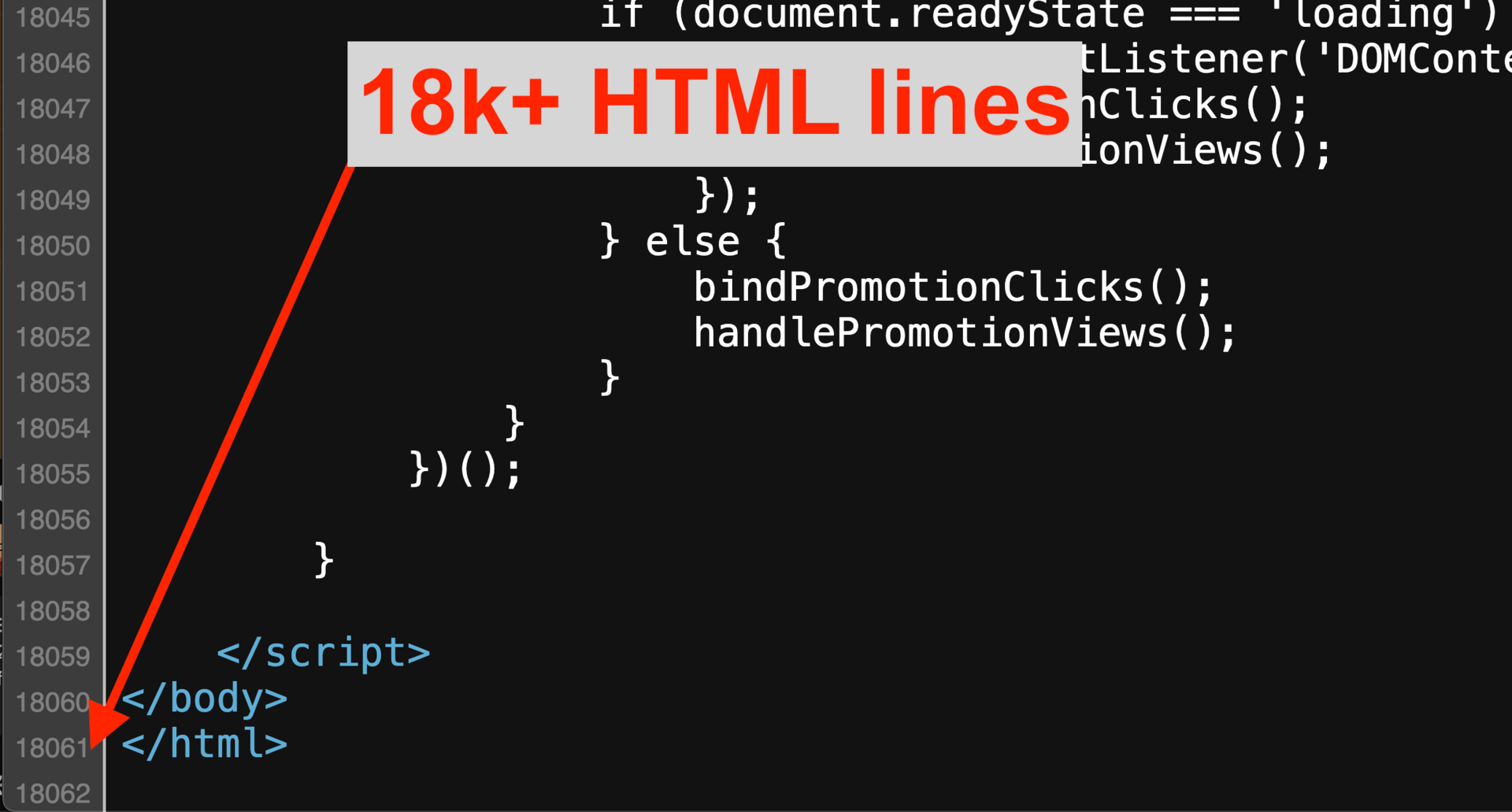

And indeed this homepage was gigantic: 18k+ HTML lines

Large HTML files can easily go above 2MB

This is happening to big names too. Look at this Amazon internal search page:

The HTML size is above 1.5MB

Amazon pages go close to the 2MB crawling & fetching limit

It was not rare AT ALL for me to Google a bit and find examples of pages with a size around 1.5MB…

Can you imagine Google cutting their crawling limit again, down to 1MB? Or even 0.5MB?

They just cut it from 15MB down to 2MB. Going from 2MB to 1MB does not even look like a big jump. Let’s hope for the best.

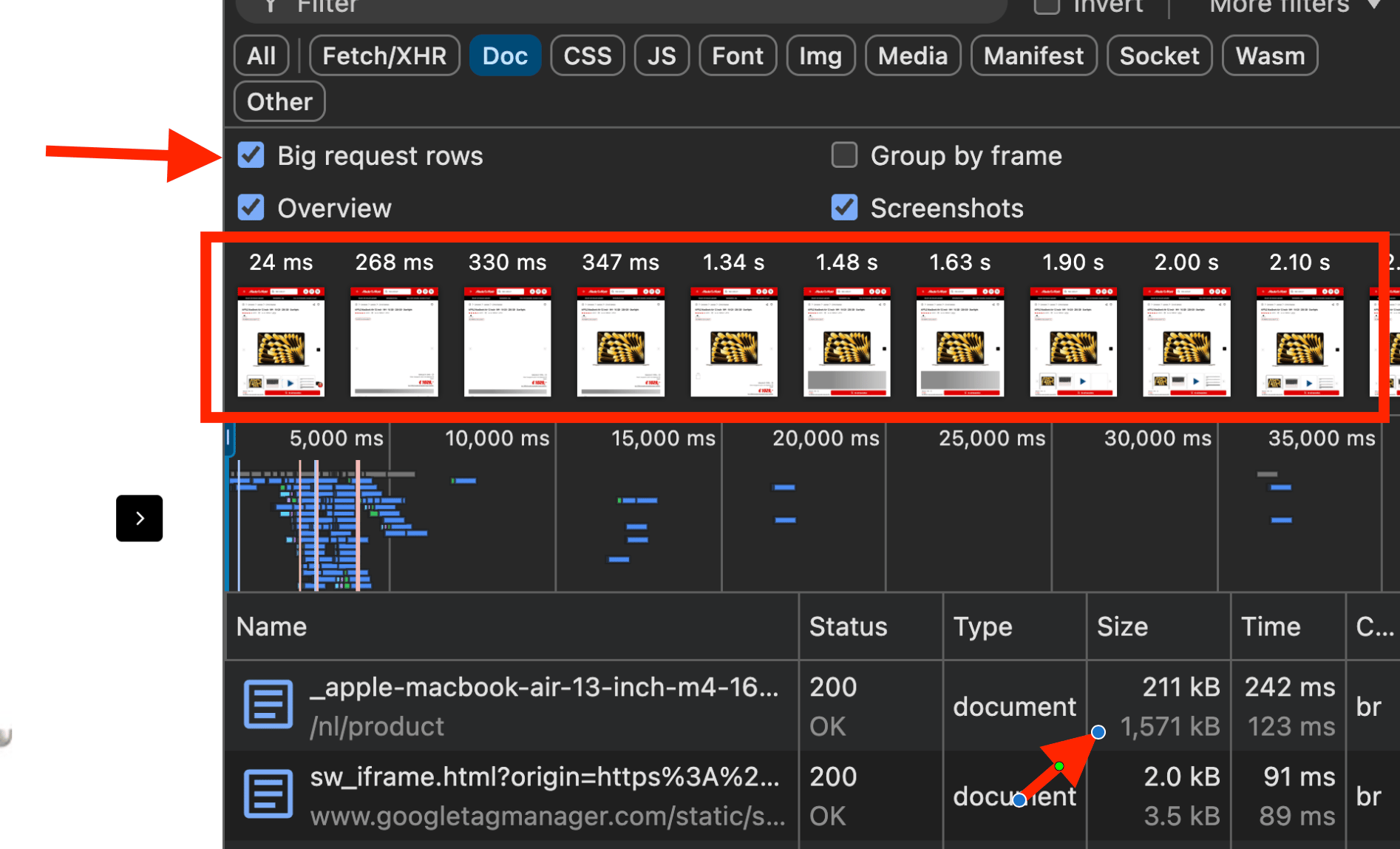

How to check if your pages/resources are above 2MB

Check whether your pages are above 2MB (uncompressed):

Go to the Developer Tools of Chrome

Go to Network > Filter

Select “Doc” (or any other file type)

Check “Big request rows”

For each result, look at the bottom number (uncompressed file size)

How to verify if your page sits above 2MB
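If you prefer a script over clicking through DevTools, the same check can be sketched in Python with the standard library only. This is a rough sketch under my own assumptions: 2MB is read as 2 × 1024 × 1024 bytes, the User-Agent string is made up, and the function names are mine. It measures only the single document you fetch, not its JS/CSS.

```python
import gzip
import urllib.request

LIMIT_BYTES = 2 * 1024 * 1024  # assumption: 2MB read as 2 * 1024 * 1024 bytes


def size_report(body: bytes, limit: int = LIMIT_BYTES) -> dict:
    """Summarize an uncompressed response body against a byte limit."""
    size = len(body)
    return {
        "bytes": size,
        "over_limit": size > limit,
        "excess_bytes": max(0, size - limit),
    }


def fetch_uncompressed(url: str) -> bytes:
    """Fetch one document and return its uncompressed bytes."""
    # Hypothetical User-Agent, only here so the request is not anonymous.
    request = urllib.request.Request(url, headers={"User-Agent": "size-check-sketch"})
    with urllib.request.urlopen(request, timeout=15) as response:
        body = response.read()
        # Some servers gzip the body even when it was not asked for.
        if response.headers.get("Content-Encoding", "").lower() == "gzip":
            body = gzip.decompress(body)
    return body


# Usage (needs network access):
#   print(size_report(fetch_uncompressed("https://example.com/")))
```

Run it per document (HTML, then each JS or CSS file) since each file is checked against the limit on its own.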

Now that we know large HTMLs (above 1.5MB) are not that rare to find, let’s focus on a problem that is way worse: blocked rendering because of SLOW execution times.

Test 2 - Rendering Gets Blocked Way Before 2MB

If the page HTML gets cut off because it is larger than 2MB, this could happen (among other things):

On-page content will not be indexed

JS calls won’t be executed

And that is it, really. It all comes down to content visibility. Your website will keep functioning correctly, yet heavy HTML = bad performance = low CWV = lower rankings.
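To get a feeling for what a cutoff would hide, here is a small Python sketch that parses a page twice, once in full and once truncated at the limit, and lists the links and script references that only exist after the cut. It assumes the crawler simply ignores every byte beyond the limit, which is my simplification, and the class and function names are mine.

```python
from html.parser import HTMLParser


class TagCollector(HTMLParser):
    """Collect <a href> and <script src> values in document order."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.scripts = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "a" and attributes.get("href"):
            self.links.append(attributes["href"])
        elif tag == "script" and attributes.get("src"):
            self.scripts.append(attributes["src"])


def collect(html_bytes: bytes):
    parser = TagCollector()
    # errors="ignore" drops a multi-byte character split by the cut.
    parser.feed(html_bytes.decode("utf-8", errors="ignore"))
    parser.close()
    return parser.links, parser.scripts


def lost_after_cutoff(html_bytes: bytes, limit: int = 2 * 1024 * 1024) -> dict:
    """Return the links and scripts that appear only beyond the byte limit."""
    all_links, all_scripts = collect(html_bytes)
    kept_links, kept_scripts = collect(html_bytes[:limit])
    return {
        "links_lost": all_links[len(kept_links):],
        "scripts_lost": all_scripts[len(kept_scripts):],
    }
```

Feed it the uncompressed HTML bytes of a heavy page: anything listed under `links_lost` or `scripts_lost` sits in the part of the document that would not be considered under this assumption.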

Here is the real issue I have been dealing with my whole SEO life:

JS (JavaScript)-rendered content being invisible (not indexed) even when all my page resources fall under 2MB

JS-rendered content is a gamble: Google might render it, or it might not…

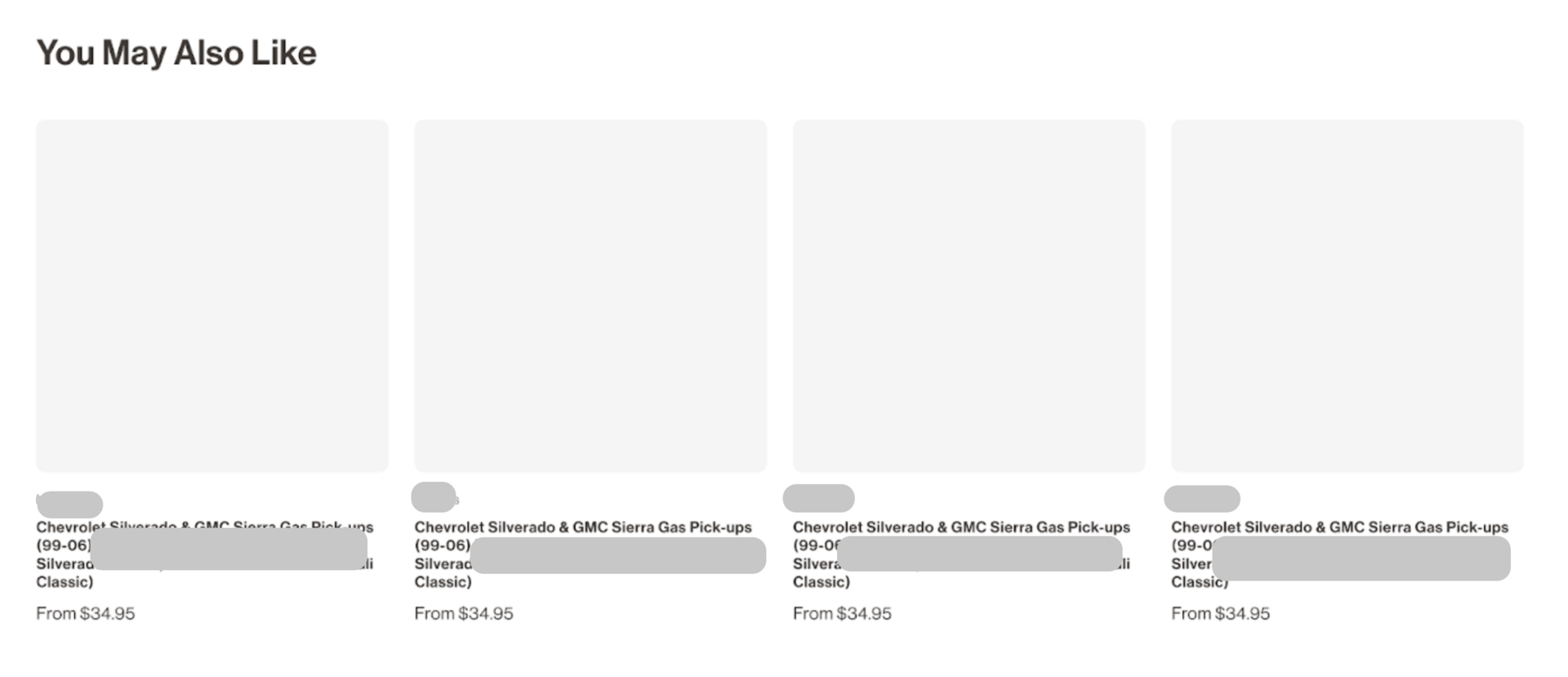

Here is an example of a client using a “recommended products” block that depends on JavaScript to be rendered:

Empty HTML section indexed by Google

I analyzed the page in Google Search Console > “View Crawled Page”. There I saw what Google picks up for the index: an empty HTML section.

The major problem: it affects internal linking.

I love those automated blocks for internal linking, they build a fantastic structure at scale… if they actually work.

For this specific case, NONE of the resources (HTML, JS, CSS, Media, PDFs, etc) are larger than 2MB, yet the content does not get fully indexed.

The JS rendering issue does not only affect the content (text) but also the actual site structure. And it affects the entire website. All of this is happening way below the 2MB crawling & fetching (for search) limit.
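One way to quantify the damage to internal linking is to compare two HTML snapshots of the same URL: the HTML copied from “View Crawled Page” and the DOM copied from your own browser. The Python sketch below lists the internal links that exist in the browser version only. The function names and the example URLs are hypothetical, and it assumes both snapshots are available as plain strings.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect every <a href> value found in the HTML."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html: str, page_url: str) -> set:
    """Return absolute URLs of links pointing to the same host as page_url."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    host = urlparse(page_url).netloc
    absolute = (urljoin(page_url, href) for href in collector.hrefs)
    return {url for url in absolute if urlparse(url).netloc == host}


def links_missing_for_google(google_html: str, browser_html: str, page_url: str) -> list:
    """Internal links present in the browser DOM but absent from the crawled HTML."""
    return sorted(internal_links(browser_html, page_url) - internal_links(google_html, page_url))
```

If the list is long, those are internal links that only exist for users and not in the version Google picked up for the index.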

The real problem then is not the new crawling file size limitation (even though it could affect millions of websites); the real problem is that your website keeps being badly indexed because of long execution/rendering times.

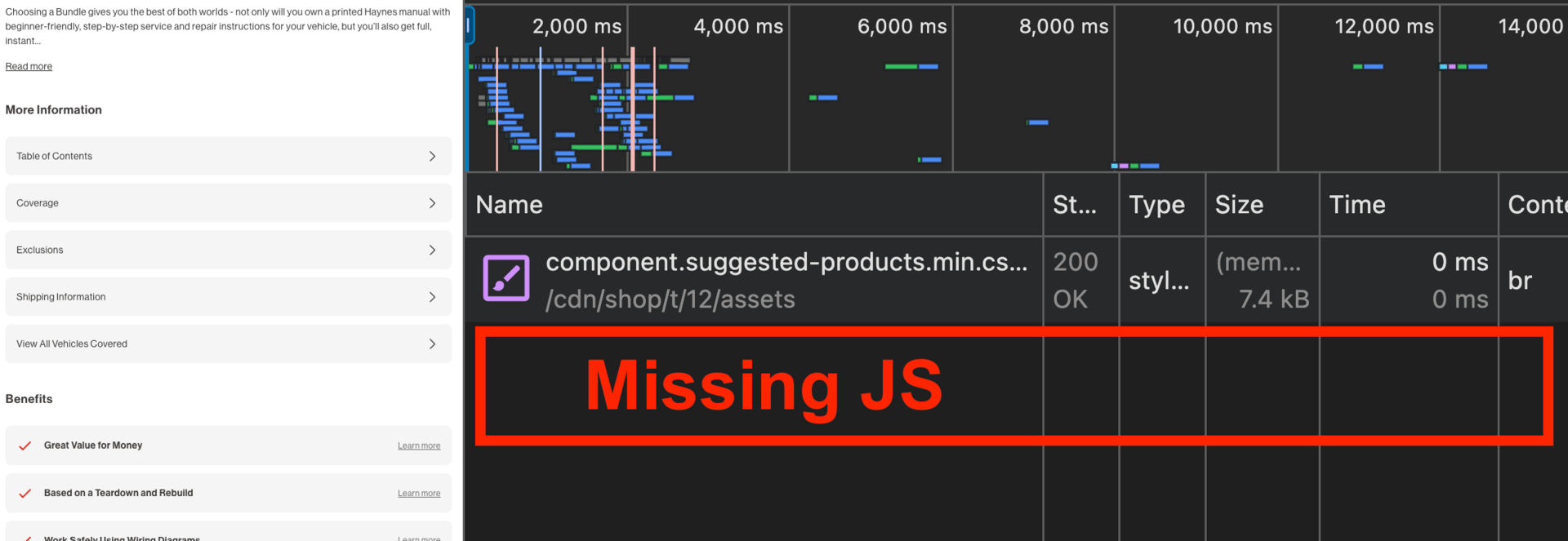

Another page from the very same website, with barely any differences in the code base, does get rendered properly, including the “recommended products” JS-rendered section:

Full JS-rendered HTML section indexed by Google

(Working properly)

How to identify the rendering issue

How come my valuable HTML section with automated JS internal links sometimes works and sometimes does not?

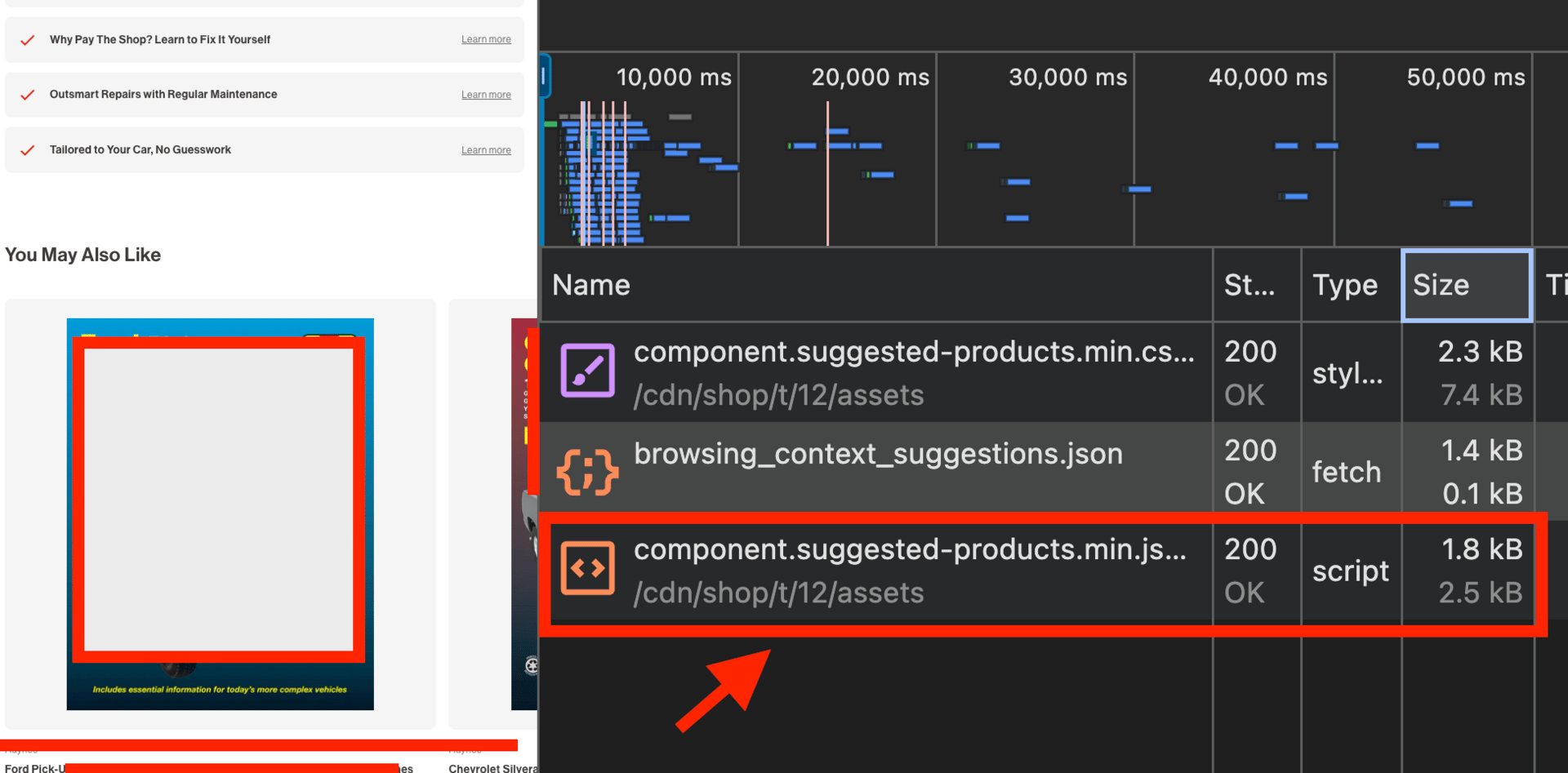

Let’s identify the Javascript file that triggers that section:

It is a “suggested products” functionality so I bet the script had a name related to “suggestions”. I got it:

JavaScript-rendered content can be indexed just fine by Google as long as the timing and size are right.

It turns out that on the working page the HTML block gets triggered in the first fold, which is exactly what does not happen for the same HTML section on the page where it is not rendered.

Here is how the same JS script is missing from the page with rendering issues:

This looks like a well-intended “lazy load”, but it creates a critical issue for the entire site: there are thousands of internal links that are not taking effect.
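The same comparison can be scripted: given the HTML of the working page and of the broken page, check which one references a script whose name contains the keyword you are after. This is a minimal Python sketch. The keyword `"suggest"` and the file paths in the example are hypothetical, and it only sees `<script src>` tags present in the HTML you give it, not scripts injected later.

```python
from html.parser import HTMLParser


class ScriptCollector(HTMLParser):
    """Collect the src value of every external <script> tag."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


def scripts_matching(html: str, keyword: str) -> list:
    """Return script URLs whose path contains the keyword (case-insensitive)."""
    collector = ScriptCollector()
    collector.feed(html)
    collector.close()
    return [src for src in collector.sources if keyword.lower() in src.lower()]


# Hypothetical snapshots of a working page and a broken page.
working_page = '<head><script src="/assets/suggestions.js"></script></head>'
broken_page = '<head><script src="/assets/app.js"></script></head>'
print(scripts_matching(working_page, "suggest"))
print(scripts_matching(broken_page, "suggest"))
```

An empty list for the broken page means the script is not referenced in that HTML at all, which points to a loading/timing problem rather than a file size problem.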

The JS is only 2.5KB, roughly 800 times smaller than the 2MB limit. Yet, regardless of this fact, the missing content piece is still not rendered for search results.

Check your files, script timing, rendering and sizes y’all!

Does the 2MB file limit matter at all?

Let’s take that website that had a 3MB page (the homepage). Its performance has been in constant decay for a couple of years straight:

Bad performing website

Is this only because of the 3MB issue? Probably not.

But here is how I see it:

Bad technical performance is a symptom of a bad SEO strategy.

If you do not care enough to improve your response sizes, crawling, rendering and indexing issues, then you are set up for failure.

The more your business grows the more relevant Technical SEO becomes.

Reply to this email with “Technical SEO” if you learned something new 🙂

Note: if you reply to this email it also helps email systems understand I’m legit and not spam.

Recommended Reads

🔎 Reddit 2MB Thread

[Reddit]

🔎 Googlebot File Limits Explained

[Barry Schwartz - Search Engine Land]

🔎 Why The 2MB Is Enough

[Roger Montti - Search Engine Journal]

🔎 Are All Crawlers “Search” Crawlers

[John Mueller on Bluesky]

🔎 Official Documentation On The New 2MB Limit

[Google Search Central Documentation]

🔎 Should You Care About The 2MB Limit

[Nikki Pilkington - Blog]

🔎 Google Crawlers Documentation

[Google Documentation - Crawling Infrastructure]

🔎 Check If Your Page Goes Beyond 2MB

[Aleyda Solis on LinkedIn]

Until next time 👋
