🔎 Focus: Technical SEO

🔴 Impact: High

🟠 Difficulty: Mid

Sponsored By Peec AI

AI search is the fastest-growing discovery channel. Your customers ask ChatGPT, Perplexity, Claude, and Gemini for recommendations daily.

Is your brand the answer?

Peec AI shows you exactly where you stand:

Track your visibility and sentiment across all major LLMs

Benchmark against competitors to see your share of voice

Get step-by-step guidance on improving your AI visibility

Turn AI search from a black box into a measurable growth channel with clear metrics and a scalable strategy.

Dear Tech SEO 👋

It’s not you, it’s… technical

I remember when I received a desperate email asking for help: “We do not know what is happening. Everything tanked too fast. What could it be?”

There are 2 situations where traffic and revenue drop within a matter of days:

Google Core Updates

Technical SEO Issues

When I checked their Search Console, I immediately knew their Technical SEO was broken.

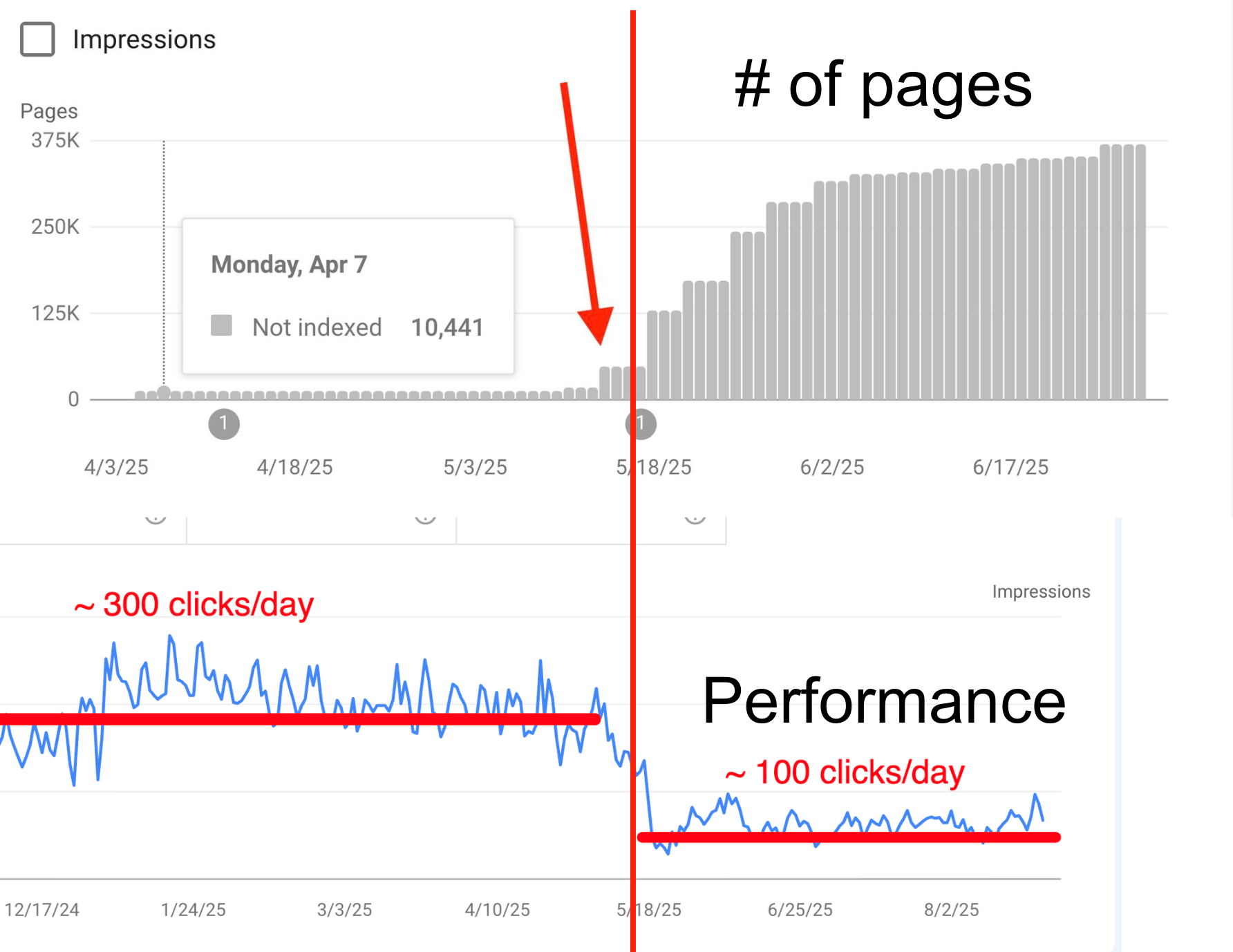

Here is an example we are recovering from:

A website increased its number of pages 4,000-fold (yes, four thousand times) because of an update to the site’s filtering functionality.

Technical SEO issues affect performance pretty rapidly

Today, I bring you the 5 most common issues I encounter that are killing your revenue.

Ready? Let’s goooooooo

1. The JavaScript Rendering Gamble

Google can render JavaScript.

But “can” doesn’t mean “will.”

Most SEOs worry about hidden text. I worry about hidden links.

Example of when rendering is blocking crawlability:

JavaScript rendering can hide links and therefore block crawlability

The "Maybe" Factor: Googlebot prioritizes raw HTML. Rendering JS is resource-heavy; it doesn’t always happen for every page or every crawl.

Discovery Bottlenecks: If your e-commerce collection page requires JS to load product links, Google may see an empty page. If it can’t see the link, the product doesn’t exist as far as Google is concerned. The “link juice” dries up on your site.

No product discoverability > lower transactions > lower revenue
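A quick way to test this is to look at the raw HTML (the server response before any JavaScript runs) and check whether your product links are already there. Below is a minimal sketch using only Python's standard library. The sample pages, the `EXPECTED` paths, and the function names are all hypothetical; in practice you would feed in the HTML you get from fetching your own collection page without rendering it.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags found in raw (unrendered) HTML."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_in_raw_html(html: str) -> list[str]:
    """Return every <a href> value present in the HTML string."""
    parser = LinkCollector()
    parser.feed(html)
    return parser.links


def missing_from_raw_html(html: str, expected_paths: list[str]) -> list[str]:
    """Return the expected paths that have no <a href> in the raw HTML."""
    found = set(links_in_raw_html(html))
    return [path for path in expected_paths if path not in found]


# Hypothetical collection page whose product grid is injected by JavaScript.
JS_ONLY_PAGE = """
<html><body>
  <a href="/collections/diapering">Diapering</a>
  <div id="product-grid"></div>
  <script src="/assets/grid.js"></script>
</body></html>
"""

# The same hypothetical page with product links in the server response.
SERVER_RENDERED_PAGE = """
<html><body>
  <a href="/collections/diapering">Diapering</a>
  <div id="product-grid">
    <a href="/products/diapers">Diapers</a>
    <a href="/products/wipes">Wipes</a>
  </div>
</body></html>
"""

EXPECTED = ["/products/diapers", "/products/wipes"]

print(missing_from_raw_html(JS_ONLY_PAGE, EXPECTED))          # ['/products/diapers', '/products/wipes']
print(missing_from_raw_html(SERVER_RENDERED_PAGE, EXPECTED))  # []
```

If the first list is not empty for your real pages, those products depend on rendering to be discovered.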

2. The Infinite Scroll - No Thanks

Infinite scroll is great for users, but Googlebot does not scroll. If your "Load More" button is JS-only, everything below the first fold is invisible to search engines.

The bot’s viewport (the height of the screen it renders) can be long, but not long enough.

Pagination triggered by JavaScript won’t allow the crawler to discover those pages

Products are not reachable: If a bot can't click a link to "Page 2," your products don't exist.

No product discoverability > lower transactions > lower revenue
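To tell the two situations apart, you can check whether the raw HTML of a listing page contains real `<a href>` links to the next pages, or only a button. The sketch below is one possible check, not a definitive audit. It assumes paginated URLs look like `?page=2` or `/page/2` (adjust `PAGE_PATTERN` for your platform), and the sample markup is hypothetical.

```python
import re
from html.parser import HTMLParser

# Assumption: paginated URLs contain "?page=N", "&page=N" or "/page/N".
PAGE_PATTERN = re.compile(r"(?:[?&]page=\d+|/page/\d+)")


class PaginationAudit(HTMLParser):
    """Records crawlable pagination links and counts <button> elements."""

    def __init__(self):
        super().__init__()
        self.paginated_links = []
        self.button_count = 0

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "a":
            href = attributes.get("href") or ""
            if PAGE_PATTERN.search(href):
                self.paginated_links.append(href)
        elif tag == "button":
            # Buttons carry no href, so a crawler has nothing to follow.
            self.button_count += 1


def audit_pagination(html: str) -> dict:
    """Summarize how the next batch of products can be reached."""
    audit = PaginationAudit()
    audit.feed(html)
    return {
        "crawlable_next_pages": audit.paginated_links,
        "buttons": audit.button_count,
    }


# Hypothetical listing page that only offers a JS-driven button.
LOAD_MORE_ONLY = '<div id="grid"></div><button id="load-more">Load More</button>'

# Hypothetical listing page that also exposes a plain link to page 2.
WITH_LINK = (
    '<div id="grid"></div>'
    '<a href="/collections/diapering?page=2">Next</a>'
    '<button id="load-more">Load More</button>'
)

print(audit_pagination(LOAD_MORE_ONLY))
print(audit_pagination(WITH_LINK))
```

An empty `crawlable_next_pages` list alongside a non-zero button count is the pattern to worry about: users can reach page 2, a crawler following links cannot.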

3. Faceted Navigation

Filter combinations (Color + Size + Material) can create thousands of unique URLs. If these are standard <a href> links, Google will try to crawl every single one.

I am working on a faceted navigation issue that went from zero to over 400k pages in a matter of weeks.

Spam Label: Such explosive growth in a matter of days can indicate low site quality or potential spam.

Diluting Ranking Power: When Google crawls 10,000 "junk" filter pages for every 1 "money" page, your core category pages lose authority and rank lower.

Indexing Delay: New products and relevant pages are hidden under a bunch of rubbish as the crawler is stuck in a loop of endless filter combinations.

40% of the revenue was lost in a matter of days, followed by months without recovery.
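To see how quickly filters multiply, here is a small back-of-the-envelope calculation. The facet sizes and the number of collections are made-up numbers for illustration, not the figures from the case above, and the sketch assumes each facet is either unset or set to exactly one value (multi-select filters grow even faster).

```python
from math import prod


def count_filter_urls(facets: dict[str, int]) -> int:
    """Count filtered URL variants for one listing page.

    Each facet contributes (number of values + 1) choices, the extra one
    being "not applied". Subtract 1 to exclude the unfiltered page itself.
    """
    return prod(values + 1 for values in facets.values()) - 1


# Hypothetical facets for a single collection page.
FACETS = {"color": 12, "size": 8, "material": 6}

# Hypothetical number of collection pages that share those facets.
COLLECTIONS = 50

per_collection = count_filter_urls(FACETS)
print(per_collection)                # 818
print(per_collection * COLLECTIONS)  # 40900
```

Three modest filters turn 50 collection pages into roughly 41,000 crawlable URLs under these assumptions, and every added facet multiplies that total again.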

4. Shopify’s Duplicate Landings

One product, two URLs. This is a classic Shopify issue we encounter way too often.

The result is massive "crawl bloat."

The Problem:

Shopify often generates product links that include the collection path. This results in two URLs for the exact same item:

The Duplicate: .../collections/diapering/products/diapers

The Primary: .../products/diapers

Even if you use a canonical tag to point to the primary page, you are still forcing Googlebot to crawl two URLs to find one piece of content.

On large stores, this effectively doubles your crawl budget usage for no reason.

Diluted Authority: Internal link equity is split between two versions of the same page, and you end up relying on excessive canonicalization (and that is the best case, when the canonicals are set up correctly).

Indexing Delays: Google spends its time crawling duplicates instead of finding your new arrivals or seasonal updates.

Wasted crawl budget > slower indexing of new arrivals > missed revenue.
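When auditing a crawl export, it helps to map every collection-scoped product link back to its primary URL so you can count how many internal links point at duplicates. Here is a minimal sketch, assuming the two URL shapes shown above; the function names and the example.com URLs are hypothetical.

```python
import re
from urllib.parse import urlsplit, urlunsplit

# Matches paths shaped like /collections/<collection>/products/<handle>
COLLECTION_PRODUCT = re.compile(r"^/collections/[^/]+/products/([^/]+)/?$")


def primary_product_url(url: str) -> str:
    """Rewrite a collection-scoped product URL to its /products/<handle> form.

    URLs that do not match the collection-scoped shape are returned unchanged.
    """
    parts = urlsplit(url)
    match = COLLECTION_PRODUCT.match(parts.path)
    if not match:
        return url
    primary_path = f"/products/{match.group(1)}"
    return urlunsplit(
        (parts.scheme, parts.netloc, primary_path, parts.query, parts.fragment)
    )


def collection_scoped_links(links: list[str]) -> dict[str, str]:
    """Map each duplicate-style link to the primary URL it should point to."""
    return {
        link: primary_product_url(link)
        for link in links
        if primary_product_url(link) != link
    }


# Hypothetical internal links taken from a crawl export.
LINKS = [
    "https://example.com/collections/diapering/products/diapers",
    "https://example.com/products/diapers",
    "https://example.com/collections/diapering",
]

print(collection_scoped_links(LINKS))
```

Every key in the result is an internal link worth updating so that it points straight at the primary product URL.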

5. Keyword Cannibalization

Sometimes, a "long-tail" strategy goes too far. When you create separate pages for near-identical search intents, you aren’t dominating the SERP. You’re forcing your own pages to fight each other.

Cannibalization example

By over-segmenting (e.g., creating one page for "best blue diapers" and another for "top diapers in blue"), you "eat" your own intent. Google gets confused about which page to rank, often resulting in both pages sitting on page two instead of one page ranking at the top.

Split authority > diluted rankings > lower rankings (fewer AI citations)
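One common way to spot candidates is to look for queries where more than one of your URLs is getting clicks. The sketch below assumes you have exported rows of (query, page, clicks) from your search performance data; the rows, the threshold, and the function name are hypothetical. It only flags candidates, so you still need to judge whether the pages really serve the same intent.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_pages=2):
    """Return queries for which at least `min_pages` URLs receive clicks.

    `rows` is an iterable of (query, page, clicks) tuples. The result maps
    each flagged query to its pages and their summed clicks.
    """
    clicks_by_query = defaultdict(lambda: defaultdict(int))
    for query, page, clicks in rows:
        clicks_by_query[query][page] += clicks

    return {
        query: dict(pages)
        for query, pages in clicks_by_query.items()
        if len(pages) >= min_pages
    }


# Hypothetical export rows: (query, page, clicks).
ROWS = [
    ("blue diapers", "/best-blue-diapers", 40),
    ("blue diapers", "/top-diapers-in-blue", 35),
    ("diaper bags", "/diaper-bags", 90),
]

print(find_cannibalized_queries(ROWS))
```

Here "blue diapers" is flagged because two pages split its clicks, while "diaper bags" has a single clear landing page.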

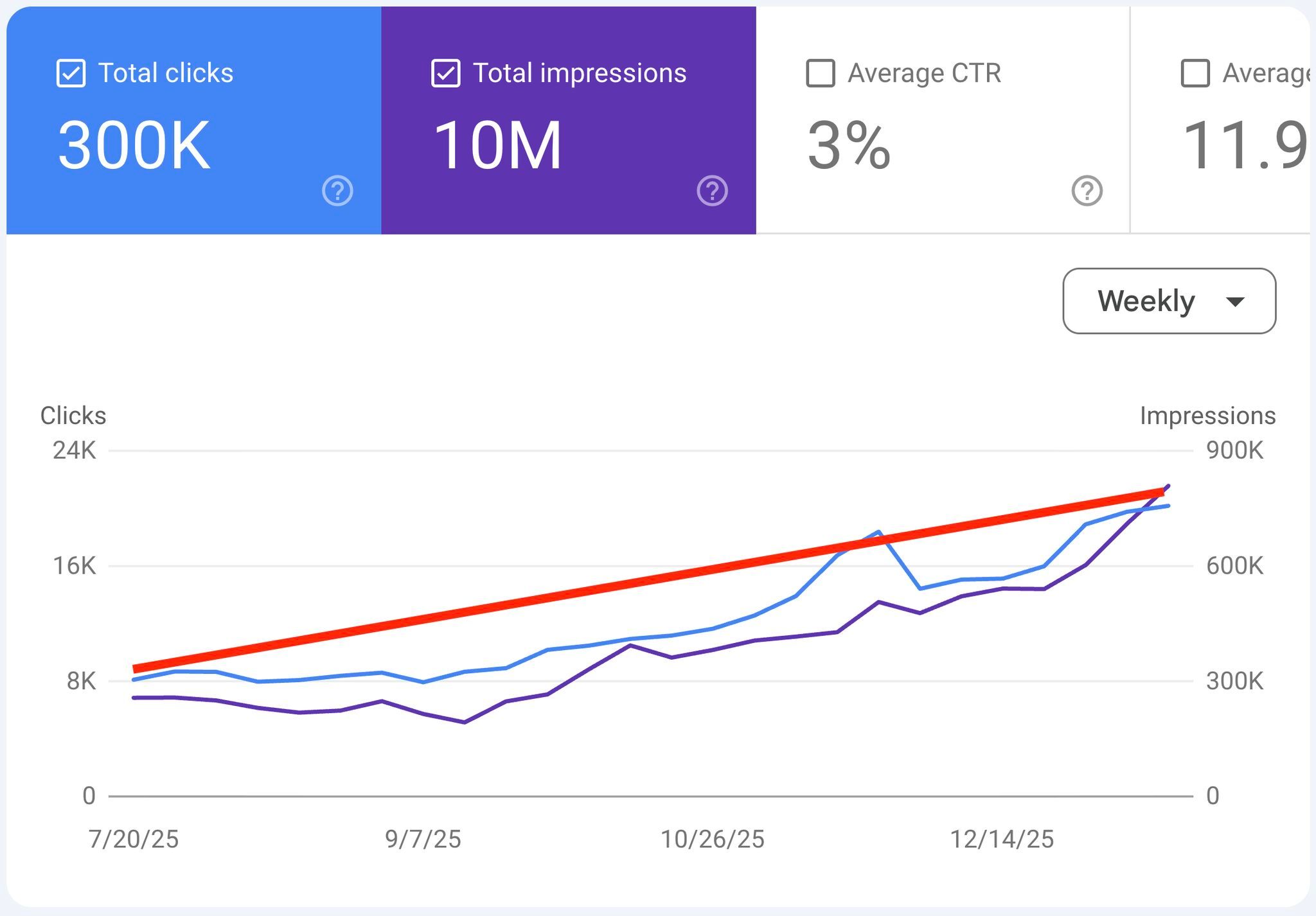

I fixed these issues and grew revenue

In this particular case, the owner (a Shopify store) reported +96 revenue per month from the US (the region they were looking to grow).

Reply with your domain name if you want me to check whether you have technical issues to fix, and how to fix them.

That’s it for today.

Hear Me Speak

I am speaking at CAMPIXX 2026 in Berlin. I will be presenting on Technical SEO, of course. More details will be shared soon.

I have a 10% discount code for you: HRMSPK2026

You joining?

Get your tickets here https://www.campixx.de/campixx-en/

Read you next time 👋
