🔎 Focus: Technical SEO

🔴 Impact: High

🟠 Difficulty: Mid-High

Sponsored By Ahrefs

Stop wondering if AI is talking about your brand. This post is brought to you by Ahrefs, the leading SEO & marketing intelligence platform.

With their new Brand Radar tool, you can finally monitor your visibility across major AI engines: from ChatGPT to Google’s AI Overviews. Secure your brand as the top choice in the era of AI.

Dear Tech SEO 👋

Traffic is still growing, especially in eCommerce. After all, the internet is the largest marketplace in the world… Today I will share one of the most recent case studies we are working on, where traffic is a growing asset.

Full 30-min over-the-shoulder video

eCommerce - Tech SEO Case Study

| Case | CMS | Size |
|---|---|---|
| Bookstore - eCommerce | Shopify | Close to 1M pages |

1 - Removing and blocking thousands of filter pages

While analyzing the Google Search Console reports, we found that a significant portion of the pages excluded from the index were filter pages (pages that filter a set of products).

Each filter generates a new page, and with all the filter combinations together we had a serious crawling and indexing issue:

Lower website quality

Canonicalized pages

Duplicated pages

Low value pages

The problem looks like this (from another case we are working on):

All filters become links, and therefore pages, cluttering the site

Most filter pages are not indexed anyway, as they add no value and don’t bring any traffic to the site. That’s why we should ultimately block them from crawling.

Filtered collection pages should be marked with a noindex, follow meta robots tag: `<meta name="robots" content="noindex, follow" />`

Why “noindex, follow”? Because the last thing you want is to create a hole in the crawl, where the bot arrives on a page from which it cannot follow any further links. Like a dead end.
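The rule above can be expressed as a small decision function. This is a minimal sketch, not the setup used on this site: it assumes filter parameters carry a `filter.` prefix (as in Shopify storefront filtering, e.g. `?filter.p.vendor=Penguin`), and the function names are hypothetical. On Shopify the same logic would live in the Liquid theme; Python is used here only to show the rule.

```python
from urllib.parse import urlsplit, parse_qsl

# Assumption: filter parameters start with "filter." (Shopify-style).
# Adjust this prefix to however your store builds filter URLs.
FILTER_PREFIX = "filter."


def is_filtered_collection(url: str) -> bool:
    """True if the URL is a collection page with at least one filter applied."""
    parts = urlsplit(url)
    if "/collections/" not in parts.path:
        return False
    params = parse_qsl(parts.query, keep_blank_values=True)
    return any(name.startswith(FILTER_PREFIX) for name, _ in params)


def robots_meta_tag(url: str) -> str:
    """Meta robots tag to print in the <head> for this URL."""
    # Filtered pages: keep out of the index, but let bots follow their links.
    content = "noindex, follow" if is_filtered_collection(url) else "index, follow"
    return f'<meta name="robots" content="{content}" />'
```

Plain pagination (`?page=2`) is deliberately not treated as a filter here; pagination gets its own handling.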

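You could also implement a robots.txt blocker (Disallow: p.filter, for example) to avoid wasting crawl resources, but only once the filter pages have been cleared from the index: a URL blocked in robots.txt can no longer be crawled, so Google would never see its noindex tag. This one is tricky.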
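Before shipping a Disallow line, it helps to test which URLs it would actually catch. Below is a minimal sketch of Google-style robots.txt matching (`*` matches any sequence, a trailing `$` anchors the end). It is a simplification: Allow rules and longest-match precedence are ignored, and the pattern `/collections/*filter.` is a hypothetical example for Shopify-style filter URLs, not the rule used on this site.

```python
import re


def robots_pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a regex.

    '*' matches any sequence of characters; a trailing '$' anchors the end.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape each literal piece, then join the pieces with '.*' for every '*'.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_disallowed(path_and_query: str, disallow_patterns: list[str]) -> bool:
    """True if any Disallow pattern matches from the start of the path.

    Simplification: Allow rules and longest-match precedence are ignored.
    """
    return any(robots_pattern_to_regex(p).match(path_and_query) for p in disallow_patterns)


# Hypothetical rule aimed at filtered collection URLs.
RULES = ["/collections/*filter."]
```

Running a sample of real URLs from your crawl through `is_disallowed` shows whether the rule catches only filter pages before it goes live.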

To stop crawlers from following those links in the first place, try hiding the filters altogether with JavaScript, or turning them into a form feature instead of actual links.
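The “form instead of links” option could look like the sketch below. It assumes the same hypothetical `filter.`-prefixed parameter names as above; the reasoning is that bots discover URLs primarily by following `<a href>` links, and a GET form exposes no href for them to follow, while users still land on the same filtered URL when they submit.

```python
from html import escape


def render_filter_form(action: str, param: str, options: list[str]) -> str:
    """Render filter options as a GET form instead of <a href> links.

    Submitting the form still produces e.g. ?filter.p.vendor=Penguin for users,
    but the markup contains no link for a crawler to follow.
    """
    boxes = "".join(
        f'<label><input type="checkbox" name="{escape(param)}" value="{escape(o)}"> {escape(o)}</label>'
        for o in options
    )
    return (
        f'<form method="get" action="{escape(action)}">'
        f'{boxes}<button type="submit">Filter</button></form>'
    )
```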

2 - Removing JS rendering from crucial content

A fundamental SEO problem arises when content does not exist on the page unless the user clicks “Show more”. Imagine 800k product pages missing crucial information about the product itself. In this case study, the product content was not available to bots at all.

Easy fix:

Make that content available (server-side rendered) on the first HTML load.
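To check the fix, look at the raw HTML the server sends, before any JavaScript runs. A minimal sketch, assuming you already have the response body as a string (from curl, for example); the class and function names are hypothetical. Text inside `<script>` is ignored, while text hidden with CSS behind a “Show more” button still counts, because it is present in the HTML.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects text nodes from raw HTML, skipping <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Text inside CSS-hidden elements is kept: it is in the HTML.
        if not self._skip_depth:
            self.chunks.append(data)


def text_in_initial_html(raw_html: str, snippet: str) -> bool:
    """True if `snippet` appears as text in the server-sent HTML (no JS executed)."""
    parser = TextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split())  # normalise whitespace
    return " ".join(snippet.split()) in text
```

A product description that only exists inside a script (injected on click) fails this check; a server-rendered one passes even if it sits in a collapsed block.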

Due to UX constraints, you might have to keep the “Show more” button with the rest of the text hidden. That is “ok” up to a point: as long as the actual text is loaded in the HTML, Google will be able to find it (we tested it and it works). Where possible, though, show all your content and avoid hiding it behind “Read more” features…

Content hidden behind “Show more” features can prevent your content from ranking

3 - Adding bulk internal linking without JS

The main problem on this website was a lack of internal links.

A website’s semantic value comes from its site structure, and the site structure is built with internal links (contextual links, navigation, breadcrumbs, related pages, etc.).

Blocks such as related products, related collections and related posts all enhance the crawlability and discoverability of content AND the semantic value of the website overall.

You have to ensure those blocks are:

Dynamic per page (meaning they differ based on the current page’s content)

Rendered on the server

Automated
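A related-products block that meets all three requirements can be sketched as follows. This is an illustration under stated assumptions, not the implementation used on this site: products are matched by tag overlap (Jaccard similarity), the `Product` type and `/products/<handle>` URLs are hypothetical, and the output is plain server-side HTML with `<a href>` links.

```python
from dataclasses import dataclass, field
from html import escape


@dataclass
class Product:
    handle: str
    title: str
    tags: set[str] = field(default_factory=set)


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of tags two products have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def related_products(current: Product, catalog: list[Product], limit: int = 4) -> list[Product]:
    """Dynamic: picks the products most similar to `current` by tag overlap."""
    scored = [
        (jaccard(current.tags, p.tags), p.handle, p)
        for p in catalog
        if p.handle != current.handle
    ]
    scored = [s for s in scored if s[0] > 0]
    # Highest similarity first; handle is a deterministic tie-breaker.
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [p for _, _, p in scored[:limit]]


def render_related_block(current: Product, catalog: list[Product]) -> str:
    """Server-rendered: plain <a href> links, no JavaScript needed to see them."""
    items = "".join(
        f'<li><a href="/products/{escape(p.handle)}">{escape(p.title)}</a></li>'
        for p in related_products(current, catalog)
    )
    return f'<ul class="related-products">{items}</ul>'
```

Because the block is computed from each page’s own data at render time, it is automated across all pages without anyone curating links by hand.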

Automated internal links help grow the semantic value of a website

Automated internal linking is a method I often use to increase the number of internal links reported in Google Search Console. The internal links report is like the pixels of an image: the more pixels, the clearer and sharper the image. I like an internal linking ratio of 100:1, meaning that a website with 1M pages should be logging 100M internal links. I don’t have a scientific basis for that ratio; it’s just that every time I worked on a successful project, the internal links ratio was around that number. A 10:1 ratio also works well :)
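The ratio is simple arithmetic: total internal links logged divided by the number of pages. A minimal sketch with hypothetical function names; the inputs would be the internal links total from Search Console and your page count.

```python
def internal_link_ratio(internal_links: int, pages: int) -> float:
    """Internal links logged per page, e.g. 100.0 means a 100:1 ratio."""
    if pages <= 0:
        raise ValueError("pages must be positive")
    return internal_links / pages


def links_missing(internal_links: int, pages: int, target_ratio: float = 100.0) -> int:
    """How many more internal links are needed to reach the target ratio."""
    return max(0, round(pages * target_ratio) - internal_links)
```

For example, a hypothetical 1M-page site logging 10M internal links sits at 10:1; reaching 100:1 means about 90M more links, roughly 90 extra internal links per page on average.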

Internal links are essential for high SEO performance

4 - Enabling pagination

The pagination on this site was implemented the wrong way: there was only a “Load more” button that worked with JavaScript.

Pagination helps product discovery: bots go to each page and crawl all the products on it. Without pagination, only a small percentage of your products are available for indexing. On this site, any product after page 1 was literally invisible.

💁‍♂️ Advanced Tech SEO Tip: Implement pagination with a noindex, follow robots tag. Any page after the first page should be “noindex”: sub-pages won’t be ranked but will serve to pass link equity. To learn more, check out Patryk Wawok’s Pagination Indexation.

Bad pagination implementation can hurt crawlability

How to implement SEO-friendly pagination (the simplest way):

Make sure pagination contains `<a href>` links

Use self-referencing canonicals

Further paginated pages should NOT be indexable (noindex, follow)
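The three rules above can be sketched as two small rendering helpers. Assumptions: pages are addressed with a `?page=N` query parameter (Shopify’s convention), page 1 is the bare collection URL, and the function names are hypothetical. Each page gets a canonical pointing at itself, pages after the first get `noindex, follow`, and navigation uses plain `<a href>` links.

```python
from html import escape
from math import ceil


def page_url(base_url: str, page: int) -> str:
    """Page 1 is the bare collection URL; later pages use ?page=N (assumption)."""
    return base_url if page == 1 else f"{base_url}?page={page}"


def pagination_head(base_url: str, page: int) -> str:
    """<head> tags for page `page` (1-based) of a paginated collection."""
    # Self-referencing canonical: each page points at its own URL, not at page 1.
    tags = [f'<link rel="canonical" href="{escape(page_url(base_url, page))}" />']
    if page > 1:
        # Sub-pages stay out of the index, but their product links are followed.
        tags.append('<meta name="robots" content="noindex, follow" />')
    return "\n".join(tags)


def pagination_links(base_url: str, total_products: int, per_page: int, current: int) -> str:
    """Plain <a href> links to every page, so bots reach all products without JS."""
    pages = ceil(total_products / per_page)
    links = []
    for n in range(1, pages + 1):
        label = f"<strong>{n}</strong>" if n == current else str(n)
        links.append(f'<a href="{escape(page_url(base_url, n))}">{label}</a>')
    return '<nav class="pagination">' + " ".join(links) + "</nav>"
```

For very large collections you might link only a window of pages around the current one; linking every page keeps this sketch short.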

Pagination can get quite complex once the sub-pages hit the index; more on that in another edition…

5 - Enhancing collection structure

The site structure should reflect search demand. An eCommerce site’s collection pages have to match transactional intent. I often see websites missing out on rankings because their landing pages do not match the actual search demand.

I usually go to keywordinsights.ai

> upload my 1000s of keywords

> let the tool cluster per intent

> translate each cluster into a (collection) page
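keywordinsights.ai does the clustering for you, and its exact method isn’t described here. As a rough illustration of the idea, the sketch below uses a common, simpler technique swapped in for the tool: greedy SERP-overlap clustering, where keywords whose top results share enough URLs are assumed to share intent. The SERP data and the `min_shared=3` threshold are hypothetical; in practice the top-10 URLs would come from a rank tracker or SERP API.

```python
def cluster_by_serp_overlap(serps: dict[str, list[str]], min_shared: int = 3) -> list[list[str]]:
    """Greedy SERP-overlap clustering.

    Each keyword joins the first cluster whose seed keyword shares at least
    `min_shared` ranking URLs with it; otherwise it starts a new cluster.
    """
    clusters: list[list[str]] = []
    seeds: list[set[str]] = []
    for keyword, urls in serps.items():
        top = set(urls)
        for seed_urls, cluster in zip(seeds, clusters):
            if len(top & seed_urls) >= min_shared:
                cluster.append(keyword)
                break
        else:
            # No existing cluster is close enough: this keyword seeds a new one.
            clusters.append([keyword])
            seeds.append(top)
    return clusters
```

Each resulting cluster would then map to one collection page.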

Keyword Clustering for Collection Pages

Then I turn the relevant keyword clusters into a content plan and execute it:

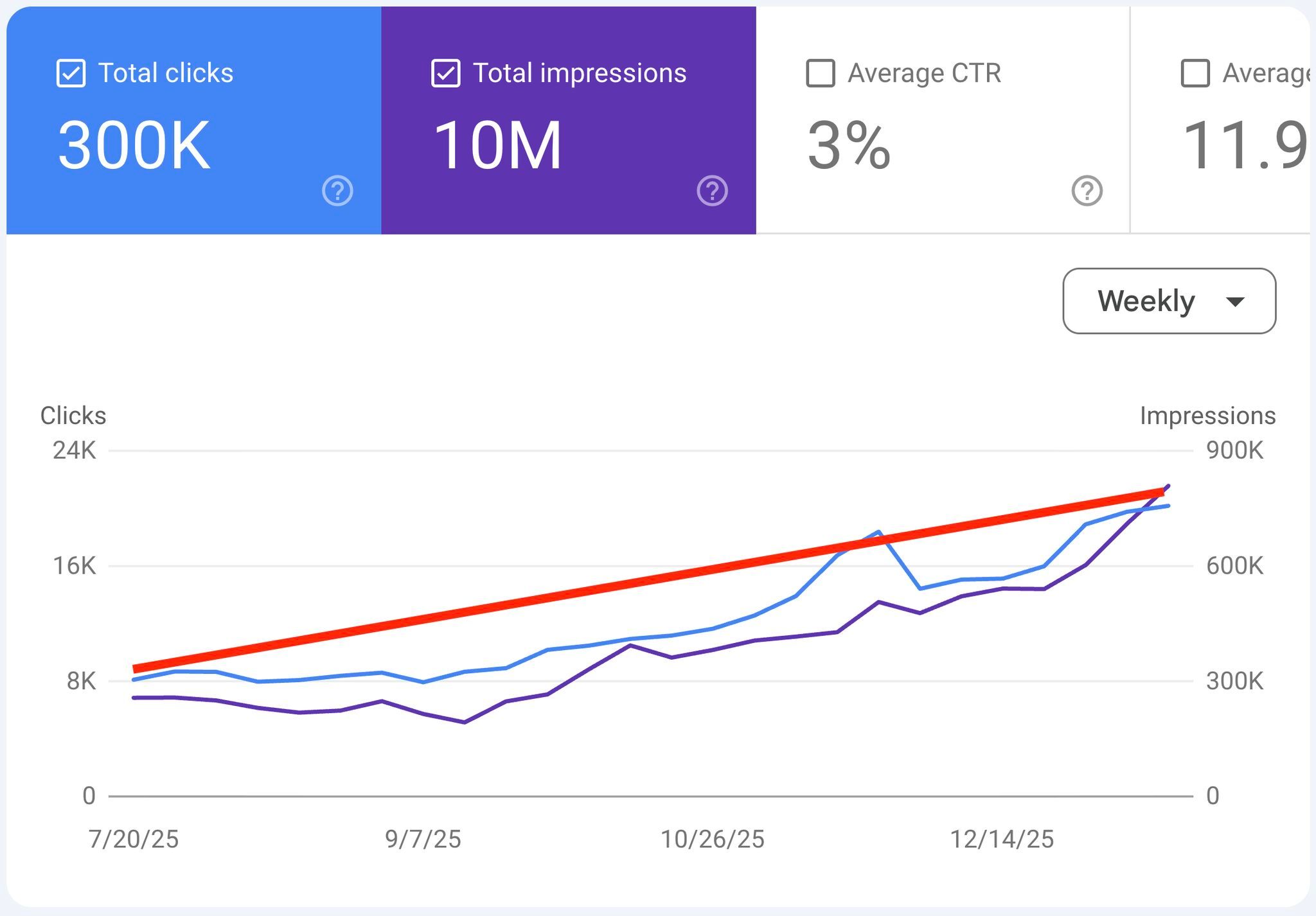

Results

+250% traffic

+76% revenue

There is still a long way to go, but it is looking good:

Technical SEO can achieve substantial organic traffic growth

If you want me to check your site for Technical SEO upgrades, just reply to this email with your domain.

Note: replying to this email also helps email systems understand that I’m legit and not spam.

Recommended Reads

Until next time 👋

—